Software Architecture

Task Diagram

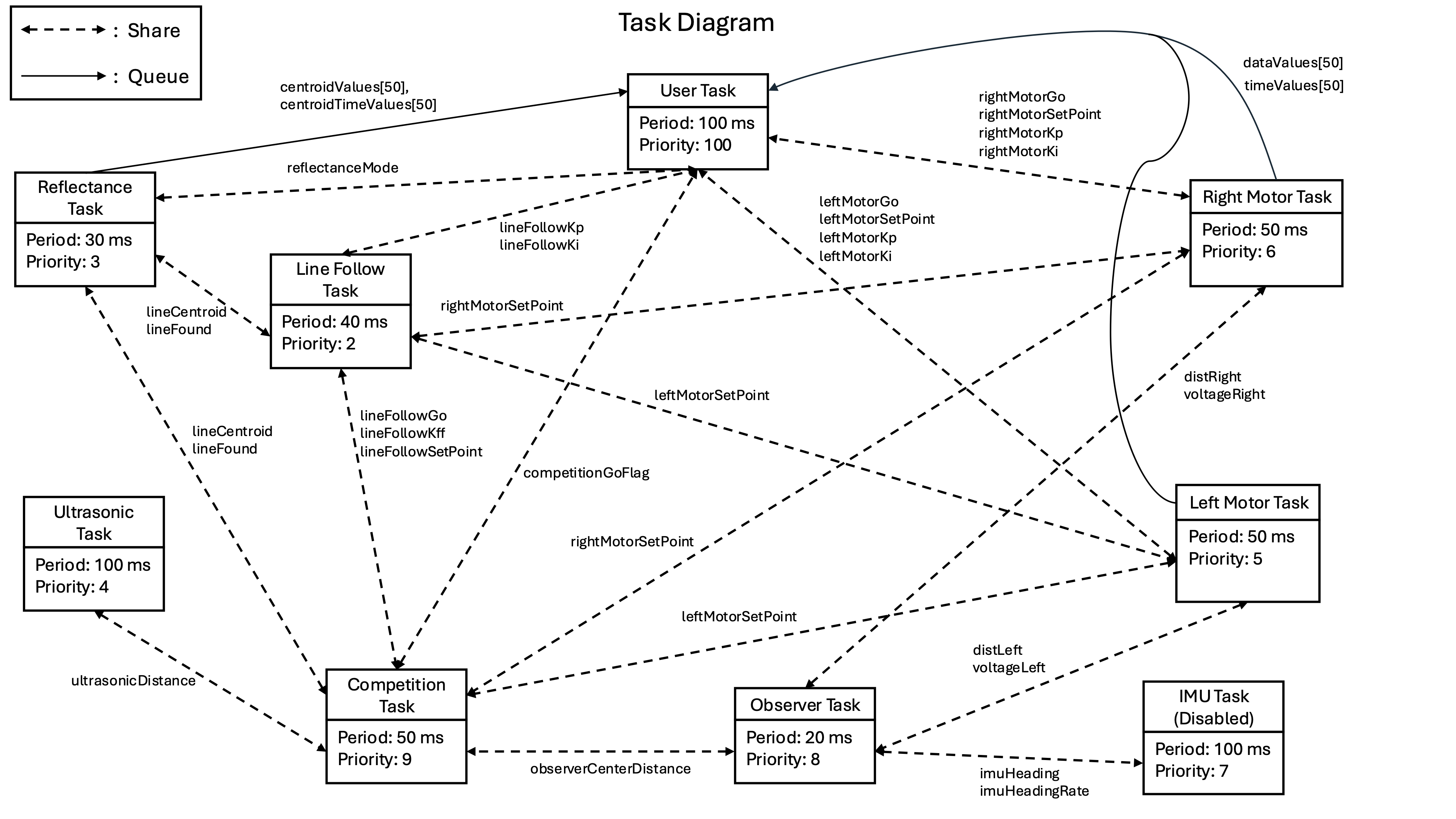

The robot runs nine concurrent tasks under a cooperative round-robin scheduler (cotask). Tasks communicate exclusively through shared variables and queues (task_share), with no direct function calls between tasks.

Task Summary

| Task | Period | Priority | Role |
|---|---|---|---|
| | 100 ms | 100 | Serial UI, command dispatch |
| | 50 ms | 5 | PI velocity control, left wheel |
| | 50 ms | 6 | PI velocity control, right wheel |
| | 30 ms | 3 | Line sensor calibration & centroid |
| | 40 ms | 2 | Steering PI + feed-forward |
| | 100 ms | 7 | Heading & heading-rate (disabled at competition) |
| | 100 ms | 4 | Distance measurement |
| | 20 ms | 8 | Center distance estimate |
| | 50 ms | 9 | Course sequencer (15-state FSM) |

Control Scheme

Motor Velocity Control

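The discrete-time PI controller (implemented in controller.py) computes:

\[ u[k] = K_p\,e[k] + K_i\,\Delta t \sum_{j=0}^{k} e[j] \]

where \(e[k]\) is the velocity error and \(\Delta t\) is the sample period, with conditional anti-windup: the integrator is held constant whenever the output is saturated and further integration would deepen the saturation. Output is clamped to ±100 % duty cycle.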

Tuned gains:

| Parameter | Value |
|---|---|
| \(K_p\) | 0.15 |
| \(K_i\) | 4.0 |
| Saturation | ±100 % duty cycle |
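The conditional anti-windup rule described above can be sketched in a few lines of Python. This is a minimal illustration, not the contents of controller.py: the class name `PIController`, its attribute names, and the choice to test saturation against the output computed before integrating are all assumptions.

```python
class PIController:
    """Discrete-time PI controller with conditional anti-windup (illustrative sketch)."""

    def __init__(self, kp, ki, dt, out_min=-100.0, out_max=100.0):
        self.kp = kp            # proportional gain
        self.ki = ki            # integral gain
        self.dt = dt            # sample period in seconds
        self.out_min = out_min  # lower saturation limit (% duty cycle)
        self.out_max = out_max  # upper saturation limit (% duty cycle)
        self.integral = 0.0     # running sum of error * dt

    def update(self, setpoint, measured):
        error = setpoint - measured

        # Output that would result with the integrator left unchanged.
        unclamped = self.kp * error + self.ki * self.integral

        # Integrating is harmful only if the output is already saturated
        # and the error would push it further past the same limit.
        deepens_high = unclamped >= self.out_max and error > 0
        deepens_low = unclamped <= self.out_min and error < 0
        if not (deepens_high or deepens_low):
            self.integral += error * self.dt

        output = self.kp * error + self.ki * self.integral
        # Clamp to the actuator range.
        return max(self.out_min, min(self.out_max, output))


# Example with the tuned gains and the 50 ms motor task period.
left_motor_pi = PIController(kp=0.15, ki=4.0, dt=0.05)
duty = left_motor_pi.update(setpoint=100.0, measured=0.0)
```

With a sustained large error, the integrator stops growing once the output reaches the limit, so the controller recovers without a long unwinding delay when the error changes sign.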

Line-Following Controller

The line-following controller steers the robot by applying a differential speed correction proportional to the centroid error:

where \(u_{LF}\) is the PI controller output driven by the reflectance centroid (range −1 to +1, where 0 = centered on line). The actuator gain scales the correction to mm/s using the effective wheel-to-wheel width of 141 mm.

A feed-forward term assists with curved track segments:

Tuned gains:

| Parameter | Value | Notes |
|---|---|---|
| \(K_p\) | 0.40 | Steering proportional gain |
| \(K_i\) | 0.30 | Integral anti-drift |
| \(K_{ff}\) (straight) | 0.0 | No feed-forward on straights |
| \(K_{ff}\) (tight curve, R125) | 0.6 | Aggressive assist |
| \(K_{ff}\) (gentle curve) | 0.3 | Moderate assist |
| Saturation | ±100 mm/s correction | |
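As a rough illustration of how a saturated differential correction might be split between the two wheel setpoints, consider the sketch below. The function name `wheel_setpoints`, the actuator gain value in the example, and the sign convention (a positive correction speeds up the left wheel) are assumptions made for illustration; the feed-forward term is omitted because its exact form is not given here.

```python
def wheel_setpoints(v_nominal, u_lf, k_act, max_correction=100.0):
    """Split a steering command into left/right wheel speed setpoints (mm/s).

    v_nominal      : forward speed setpoint in mm/s
    u_lf           : steering controller output (dimensionless)
    k_act          : actuator gain converting u_lf to mm/s (hypothetical value)
    max_correction : saturation limit on the correction in mm/s
    """
    correction = k_act * u_lf
    # Saturate the correction to +/- max_correction.
    correction = max(-max_correction, min(max_correction, correction))
    # Assumed sign convention: positive correction turns the robot right.
    v_left = v_nominal + correction
    v_right = v_nominal - correction
    return v_left, v_right


# Example: 200 mm/s forward speed with a moderate steering command.
v_left, v_right = wheel_setpoints(v_nominal=200.0, u_lf=0.5, k_act=100.0)
```

Applying the correction symmetrically keeps the average of the two wheel speeds at the nominal value, so steering does not change the robot's forward speed.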

State Observer

A discrete-time Luenberger observer was designed offline for the robot’s linearized model. The state vector is:

with the update equations:

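The observer task runs at 20 ms to match the model’s discretization period. In competition, the full observer was replaced with a simple wheel-average estimator for reliability:

\[ \hat{s} = \frac{s_L + s_R}{2} \]

where \(s_L\) and \(s_R\) are the left and right wheel travel distances and \(\hat{s}\) is the center distance estimate.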
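For comparison, the sketch below puts a textbook discrete-time Luenberger update next to the wheel-average estimate. It uses the standard form x̂[k+1] = A x̂[k] + B u[k] + L (y[k] − C x̂[k]), which may differ from the formulation used in this project. The matrices, the function names, and the single-state example are placeholders, not the robot's model.

```python
def mat_vec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list)."""
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def vec_add(a, b):
    """Element-wise sum of two vectors."""
    return [x + y for x, y in zip(a, b)]


def observer_step(x_hat, u, y, A, B, C, L):
    """One textbook Luenberger update; returns the next state estimate."""
    y_hat = mat_vec(C, x_hat)                           # predicted measurement
    innovation = [yi - yh for yi, yh in zip(y, y_hat)]  # measurement residual
    predicted = vec_add(mat_vec(A, x_hat), mat_vec(B, u))
    return vec_add(predicted, mat_vec(L, innovation))


def center_distance(s_left, s_right):
    """Wheel-average estimate of the distance travelled by the robot center."""
    return 0.5 * (s_left + s_right)


# Toy single-state example (placeholder numbers, not the robot model).
x_next = observer_step([0.0], [2.0], [4.0],
                       A=[[1.0]], B=[[0.5]], C=[[1.0]], L=[[0.5]])
s_center = center_distance(100.0, 110.0)
```

The wheel-average estimator needs no model matrices or gain tuning, which is consistent with choosing it for reliability in competition.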

Algorithms & Design Decisions

Cooperative Multitasking

Each task is a Python generator function that calls yield at least once per iteration, returning control to the cotask scheduler. The scheduler runs each task at its configured period using priority-based round-robin scheduling. Inter-task data is exchanged through 41 Share objects (single-value, interrupt-safe) and four 50-element Queue buffers for time-series logging.

Configuration (PI gains, IMU calibration offsets) is persisted to gains.json and imu.json on the MCU filesystem so that settings survive power cycles.
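The generator-plus-shared-variable pattern can be illustrated with a small self-contained stand-in. The `Share` class and `run` function below are simplified placeholders written for this example. They are not the cotask or task_share APIs, they count periods in scheduler ticks rather than milliseconds, and they assume that a higher priority number runs first.

```python
class Share:
    """Single-value mailbox standing in for a shared variable (placeholder)."""

    def __init__(self, value=0):
        self._value = value

    def put(self, value):
        self._value = value

    def get(self):
        return self._value


def producer(share):
    """Task that publishes an incrementing counter, then yields."""
    count = 0
    while True:
        count += 1
        share.put(count)
        yield  # hand control back to the scheduler


def consumer(share, log):
    """Task that records whatever value is currently in the share."""
    while True:
        log.append(share.get())
        yield


def run(tasks, ticks):
    """Run (priority, period_in_ticks, generator) tuples for a number of ticks."""
    # Assumption for this sketch: higher priority number runs first.
    ordered = sorted(tasks, key=lambda task: -task[0])
    for tick in range(ticks):
        for _priority, period, gen in ordered:
            if tick % period == 0:
                next(gen)  # run the task until its next yield


share = Share()
log = []
run([(2, 1, producer(share)), (1, 2, consumer(share, log))], ticks=6)
```

Because each task runs only until its next yield, no task can be interrupted by another in the middle of an update, and the two tasks never call each other directly.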

Reflectance Centroid Calculation

Each of the 7 IR sensors is first normalized using a two-point calibration:

\[ v_i = \frac{r_i - r_{light,i}}{r_{dark,i} - r_{light,i}} \]

where \(r_i\) is the averaged raw ADC reading (averaged over 5 reads per cycle to reduce noise), and \(r_{dark,i}\), \(r_{light,i}\) are per-sensor dark and light reference values captured during calibration. The result is 0 for a white surface and 1 for the black line.

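The line position is then computed as a weighted centroid:

\[ C = \frac{\sum_{i=1}^{7} w_i\,v_i}{\sum_{i=1}^{7} v_i}, \qquad w_i \in \{-3, -2, -1, 0, 1, 2, 3\} \]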

\(C\) ranges from −3 (line at far left) to +3 (line at far right), with 0 meaning centered. For the line-following controller the output is divided by 3 to normalize to [−1, +1].

Line-loss detection uses the sum of calibrated values \(S = \sum v_i\). If \(S < 1.0\) (all sensors read white — line not found) or \(S > 6.85\) (all sensors read black — sensor off track), the line is considered lost and the last valid centroid is returned instead.
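The normalization, centroid, and line-loss steps can be combined into a short Python sketch. The function names, the tuple return value, and the left-to-right weight ordering are assumptions for illustration; the thresholds 1.0 and 6.85 are the ones quoted above.

```python
WEIGHTS = (-3, -2, -1, 0, 1, 2, 3)  # assumed sensor positions, left to right
LOST_LOW = 1.0    # below this sum, every sensor is reading white
LOST_HIGH = 6.85  # above this sum, every sensor is reading black


def normalize(raw, light, dark):
    """Two-point calibration: about 0 on the light reference, 1 on the dark one."""
    return [(r - lt) / (dk - lt) for r, lt, dk in zip(raw, light, dark)]


def centroid(values, last_valid=0.0):
    """Return (centroid, line_lost) for a list of calibrated sensor values."""
    total = sum(values)
    if total < LOST_LOW or total > LOST_HIGH:
        # Line lost: fall back to the last valid centroid.
        return last_valid, True
    c = sum(w * v for w, v in zip(WEIGHTS, values)) / total
    return c, False


# Example: sensors 5 and 6 (counting from the left) see the line.
light_ref = [200] * 7
dark_ref = [3000] * 7
raw = [200, 200, 200, 200, 3000, 3000, 200]
values = normalize(raw, light_ref, dark_ref)
c, lost = centroid(values)
steering_input = c / 3  # normalized to [-1, +1] for the controller
```

Checking the sum before dividing also guards against division by zero when every sensor reads white.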

During calibration, the robot is placed on a light surface and then on a dark surface; each phase collects 100 samples at 10 ms intervals. Per-sensor averages are stored to ir_calibration.json on the MCU filesystem and reloaded on startup.
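A minimal sketch of the averaging and persistence step is shown below, using the standard json module. The JSON keys "light" and "dark" and the function names are hypothetical; the actual layout of ir_calibration.json is not specified here.

```python
import json


def average_samples(samples):
    """Average a list of per-sample sensor readings column by column."""
    n = len(samples)
    return [sum(column) / n for column in zip(*samples)]


def save_calibration(path, light_samples, dark_samples):
    """Store per-sensor light and dark averages as JSON and return them."""
    data = {
        "light": average_samples(light_samples),  # hypothetical key name
        "dark": average_samples(dark_samples),    # hypothetical key name
    }
    with open(path, "w") as f:
        json.dump(data, f)
    return data


def load_calibration(path):
    """Reload calibration data, e.g. at startup."""
    with open(path) as f:
        return json.load(f)
```

On the robot, each sample list would hold 100 readings of 7 values, collected once on the light surface and once on the dark surface.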